Yesterday, Tesla announced that from now on, every vehicle it produces, including the new Model 3, will have the hardware necessary to drive itself. Once the software catches up, that should mean the cars are programmed to avoid cyclists in risky scenarios, which is definitely good news. But it also makes us wonder whether they could end up being “bullied” by the two-wheeled fraternity.

“Self-driving vehicles will play a crucial role in improving transportation safety and accelerating the world’s transition to a sustainable future. Full autonomy will enable a Tesla to be substantially safer than a human driver, lower the financial cost of transportation for those who own a car and provide low-cost on-demand mobility for those who do not,” said Tesla CEO Elon Musk.

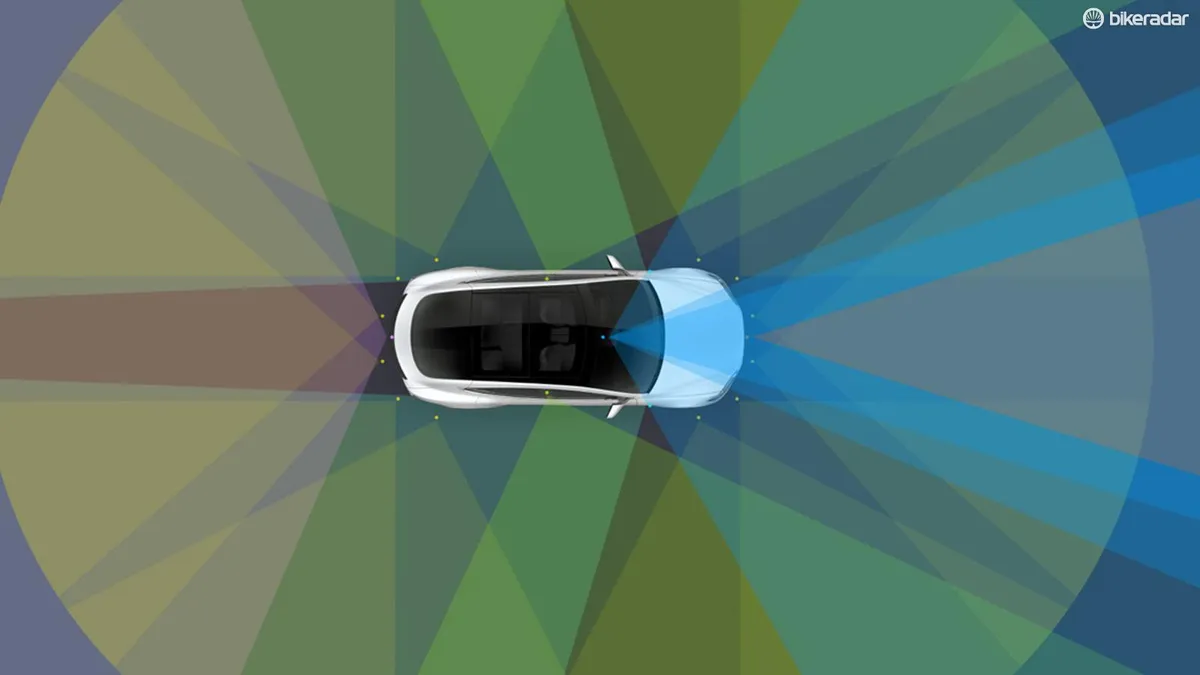

The electric automaker uses a system of eight cameras and 12 ultrasonic sensors to detect both hard and soft objects. (Do cyclists count as “soft” objects?)

To process all this data, the vehicles use a new on-board computer that is claimed to have 40 times the computing power of previous versions.

According to Tesla, while vehicles purchased today will have the hardware necessary for autonomous operation, there’s additional software development and real-world testing that must be done before the vehicles can be truly self-driving.

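Tesla claims that full autonomy is safer than a human driver, and a recent report published by Google appears to support this claim. Google’s self-driving cars use some of the same kinds of sensors as Tesla’s, so its findings may give an idea of what Tesla’s cars could do, although the two systems are not identical.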

What this means for cyclists

According to the report, the vehicles in Google's autonomous fleet are able to identify and avoid scenarios that frequently cause collisions with cyclists.

Here are a few of the examples Google outlines in its report:

- Google’s cars can slow down and give a cyclist room to swerve when someone in a parked car opens their door

- The vehicles are programmed to give cyclists “buffer room”, similar to the three- or four-foot passing laws common in many regions

- On-board sensors can identify cyclists’ hand gestures that indicate a turn or a desire to take the lane

- When a cyclist takes the lane, the cars are programmed to react accordingly

- The software is able to identify many different types of bicycles, regardless of wheel size or color. It can also identify tandems and unicycles.

The question remains: are you more willing to trust an algorithm than an individual when your life is at stake?

How this could give cyclists the upper hand on the roads

What we're now wondering, though, is whether cyclists could take advantage of a self-driving car's programming. If a rider knows that a car is programmed to automatically avoid collisions with cyclists, they could decide not to give way at a roundabout, or make risky moves like undertaking on a busy road.

In the latter scenario, provided the car's sensors could accurately and reliably detect the cyclist, the rider would be able to undertake a car turning left in the UK (or right in the US) without any adverse consequences for anyone except the car's occupants, who would be slightly delayed.

We're definitely not saying this is a good thing: the potential risks of such moves, and the trust they require, make us shudder. But it would change the balance of power on the road considerably. What do you think?